TL;DR: The OG Score is a community rating calculated from independent social audits submitted by verified Crypto OG. It assesses six metrics: Team, Security, Community, Innovation, Tokenomics, and Roadmap. Unlike code audits, it focuses on off-code risks such as intent signals, incentive alignment, governance quality, and economic sustainability. Reviews are permanent, attributed to the reviewer, and used to compute a public consensus score.

At a Glance: The OG Score Explained

What is the OG Score? OG Score is the proprietary trust rating powered by the OGAudit Social Audit Methodology.

Who creates it? Verified Crypto OG, independent Crypto Social Auditors from the OGAudit community.

How is it calculated? OGs rate the six metrics that make up the OG Score.

How is it updated? It updates dynamically whenever an OG conducts a social audit and submits a review.

What does it measure? Human risk and intent signals, product-market fit, economic sustainability, and actual performance against stated promises.

How the OG Score is Calculated

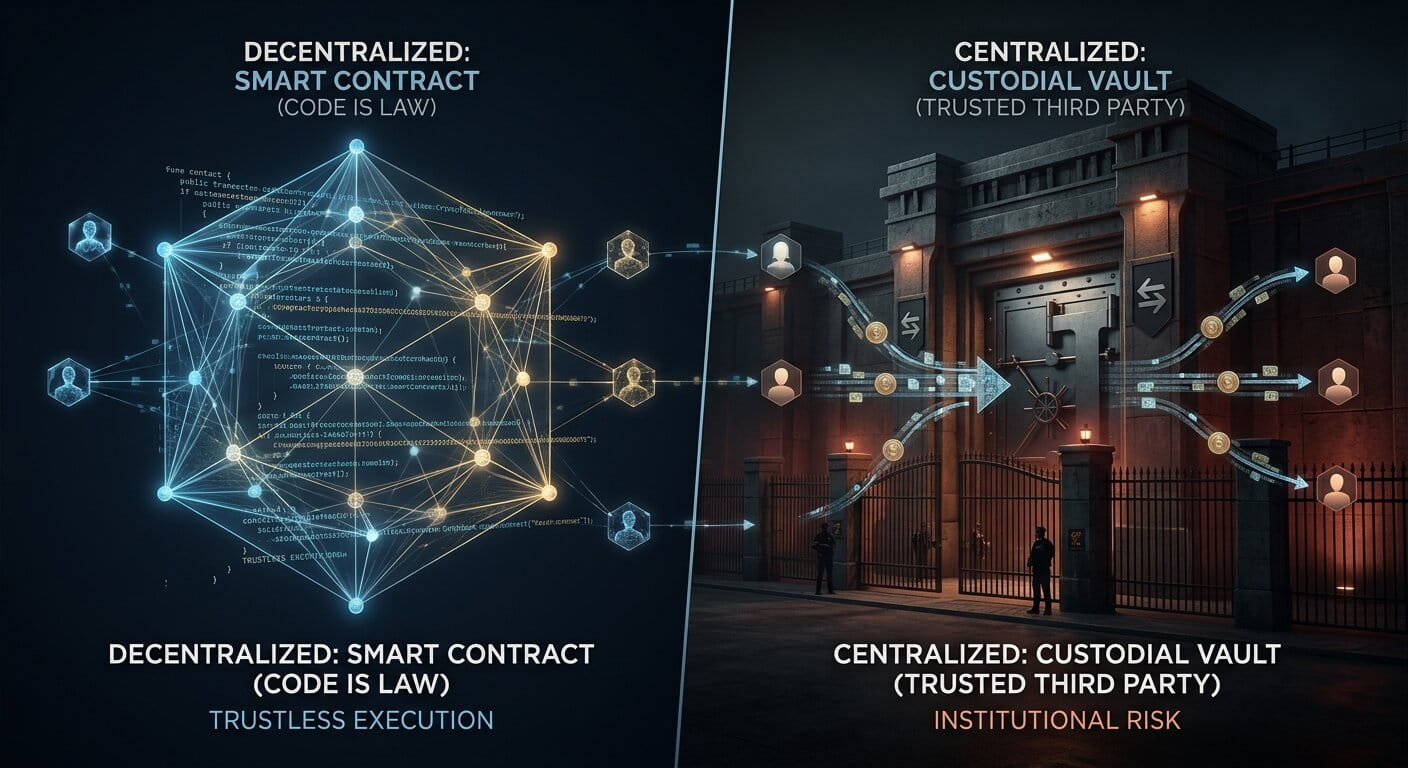

The OG Score is not an arbitrary number. Code audits and reputation signals can confirm technical correctness, but they do not measure intent, incentive alignment, governance quality, or market-structure risks. This score complements technical audits by standardizing off-code risk signals into a comparable rating. It is produced through a structured, transparent process designed to reduce manipulation and improve accountability.

Evidence standards: Before scoring, we rely on public, verifiable sources such as security audit reports, governance records, official documentation and statements, public repositories, and on-chain data. We do not accept private documents that cannot be independently verified.

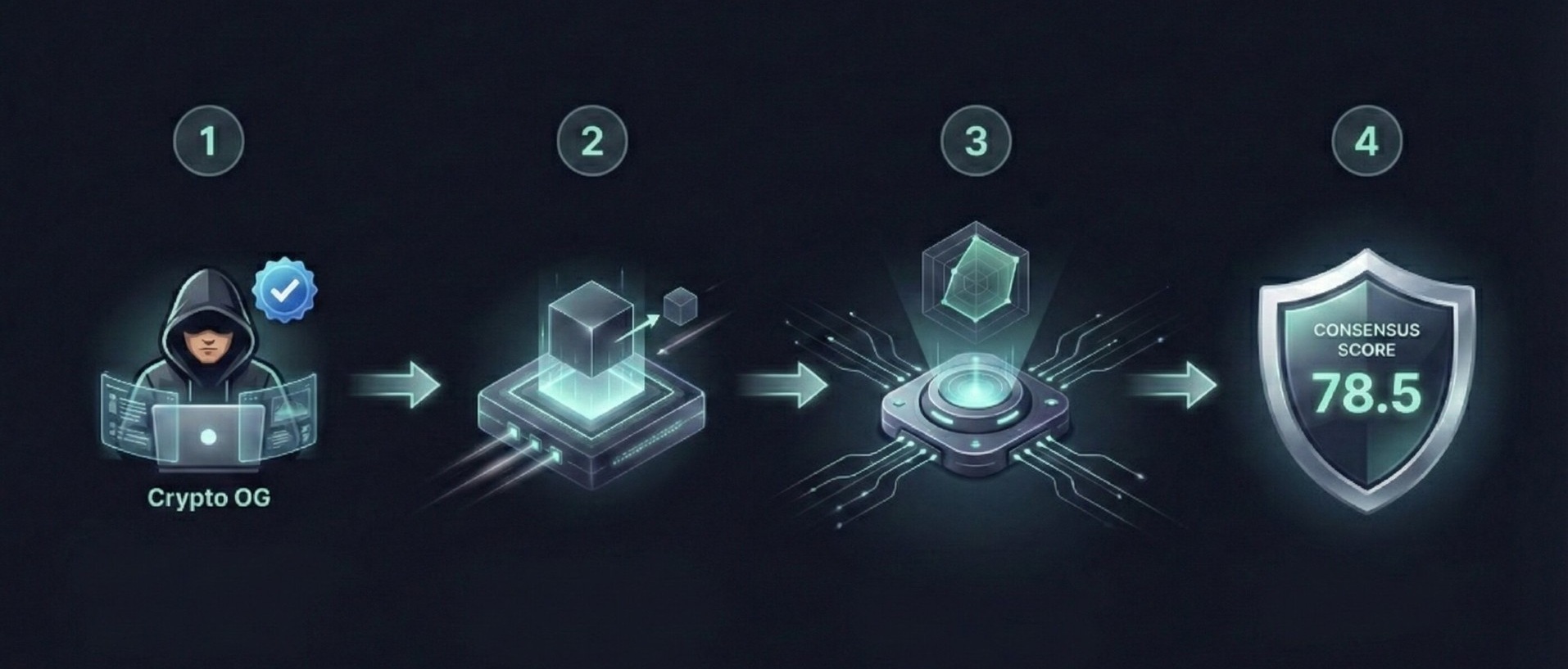

Calculation Workflow

1. Independent Audit: A Crypto OG conducts a social audit, rating the project on the six core metrics (1 to 100 scale) based on gathered evidence according to our methodology.

2. Permanent Record: The review is permanently recorded on the platform, displayed on the relevant coin page, and linked to the auditor’s profile to ensure accountability.

3. Metric Aggregation (The Radar): The platform first calculates the average score for each of the six metrics individually across all submitted reviews. This creates the "Coin Evaluation Metrics" radar chart, clearly visualizing the project's specific consensus strengths and weaknesses (e.g., strong Community but weak Tokenomics).

4. Final Score Consensus: The official OG Score shown in coin tables and on the coin page is the simple average of the total scores submitted by independent Crypto OGs, representing the final community consensus.
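The aggregation in steps 3 and 4 can be sketched in a few lines of Python. This is an illustrative sketch, not the platform's implementation: the function names and sample reviews are hypothetical, and it assumes that a review's "total score" is the unweighted mean of its six metric ratings, which the workflow above does not specify.

```python
from statistics import mean

METRICS = ("team", "security", "community", "innovation", "tokenomics", "roadmap")

def metric_averages(reviews):
    """Step 3: average each metric across all reviews (the radar chart values)."""
    return {m: mean(r[m] for r in reviews) for m in METRICS}

def review_total(review):
    """ASSUMPTION: a review's total score is the unweighted mean of its six ratings."""
    return mean(review[m] for m in METRICS)

def og_score(reviews):
    """Step 4: simple average of the total scores of all reviews."""
    return mean(review_total(r) for r in reviews)

# Two hypothetical reviews on the 1 to 100 scale.
reviews = [
    {"team": 80, "security": 70, "community": 90,
     "innovation": 60, "tokenomics": 50, "roadmap": 70},
    {"team": 60, "security": 50, "community": 70,
     "innovation": 40, "tokenomics": 30, "roadmap": 50},
]

radar = metric_averages(reviews)  # per-metric consensus values
score = og_score(reviews)         # single consensus score
```

With these sample reviews, the radar shows Community as the strongest metric and Tokenomics as the weakest, while the final score averages the two review totals.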

Limitations: Although we have a clear Methodology, Community Guidelines, and a community cross-check mechanism to reduce the risk of error, social audits rely on public information and human judgment. An audit may therefore miss warning signs or fail to catch red flags. The OG Score is a strong diagnostic tool, not a guarantee of a project's future success.

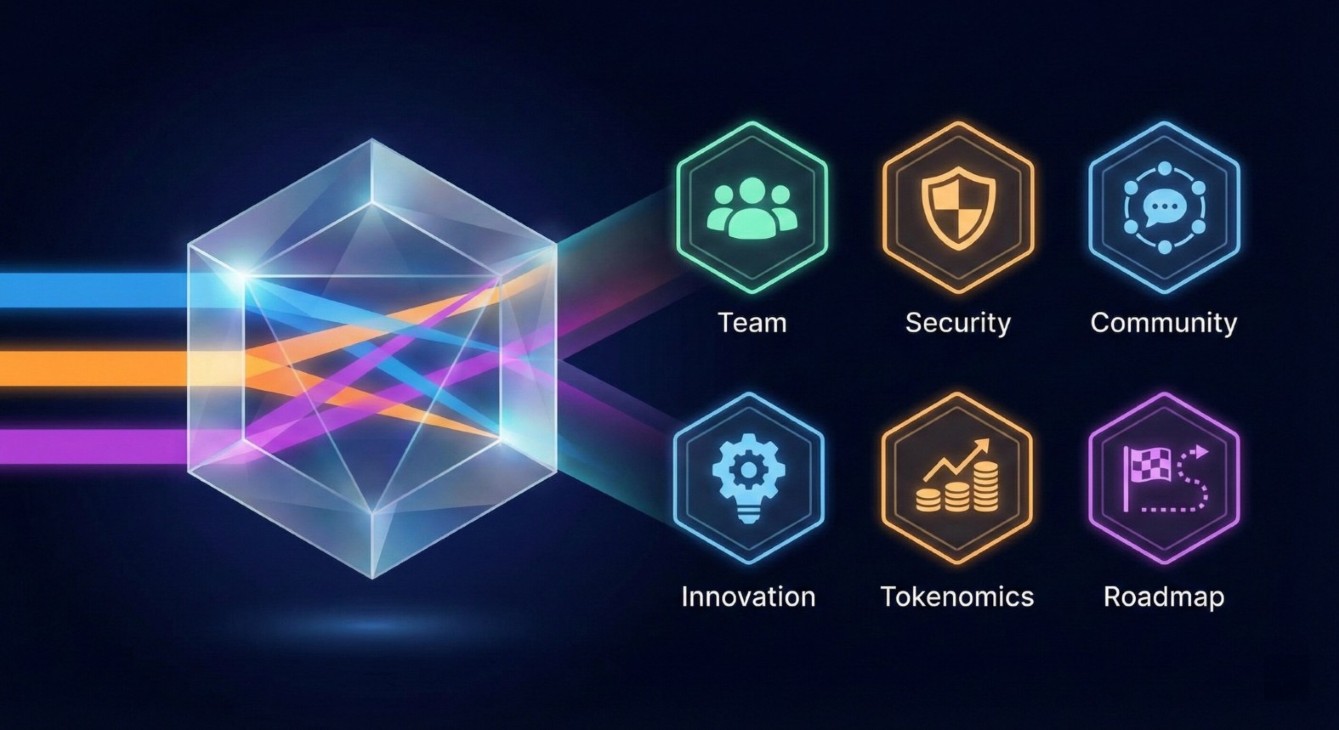

The 6 Core Metrics (From 3 Pillars)

We begin with three investigation lenses: team legitimacy, community authenticity, and promise versus reality. To calculate the OG Score, we distill the findings from these lenses into the six core metrics shown on the dashboard:

- Team Identity > Team & Security. (Key security checks, early scam signals, liquidity fairness, team identity, reputation, and backers.)

- Community Health > Community. (We separate real human engagement from bot farms that inflate holder counts and volume.)

- Promise vs. Reality > Innovation, Tokenomics, & Roadmap. (We verify that a usable product exists, token distribution is fair, stated promises are being met, and the economic model is sustainable.)

1. Team: The Credibility Check

We evaluate the experience, history, and delivery capability of the builders. In an industry where anonymity is common, credibility must be established through action.

How to interpret: High scores suggest verifiable builders and consistent delivery; low scores suggest unclear identity or weak execution proof.

- Identity Signals: We look for inconsistencies. In an era where scammers can use the names of famous people, a LinkedIn profile isn't enough. We look for official account endorsements and verified sources. We occasionally use other methods to spot fake identities, which we will cover later (link will be uploaded here).

- Development Consistency: We analyze public repositories (such as GitHub) for a consistent commit history and check whether the team communicates regularly with the community.

- Backers and Resource Allocation: Do the team's size and composition match the roadmap's ambition, and are its efforts supported by backers?

2. Security: The Safety Assessment

This assessment goes beyond code audits to evaluate the overall safety of the protocol environment and the privileges held by the team. We may use third-party analytics and security tools such as Bubblemaps, GoPlus, and DefiLlama to surface leads and patterns, but scores rely on verifiable primary sources and on-chain evidence, not tool outputs alone.

How to interpret: High scores suggest controlled privileges and safer liquidity; low scores suggest centralization or elevated exploit and rug-pull risk.

- Administrative Controls: We review the recency and scope of technical audits to ensure they match the deployed contract. Then we check for excessive permissions, honeypot behavior, freeze functions, unfair taxes, and similar risks through code audits.

- Contract Renouncement: For meme coins or anonymous teams, a renounced contract is often preferred to reduce rug-pull risk. For complex DeFi protocols, however, admin control is not inherently negative if it is disclosed and constrained through timelocks and multisig wallets.

- Liquidity Safety: We prioritize locked DeFi liquidity over exchange listings. Centralized exchanges can delist a coin quickly, leaving holders trapped. A DeFi pool is often the only way out. We check whether liquidity is deep enough, whether it is burned or locked on trusted third-party platforms (UNCX, PinkSale, etc.), and for how long.

3. Community: The Engagement Reality

We separate organic user interest from artificial noise. This metric measures the quality of discourse and the legitimacy of the user base. Social and on-chain activity can easily be manipulated by bots, so we cross-check multiple signals before scoring.

How to interpret: High scores suggest organic engagement and credible adoption; low scores suggest bots, censorship, or inflated metrics.

- Discourse Quality: We prioritize signals that are harder to curate or delete, such as public reply threads on X (Twitter) and governance forums. We also review Discord and Telegram logs, but we treat them as lower-confidence sources because moderation can remove context.

- Response to Criticism: Does the team address difficult questions transparently, or do they ban users who seek clarity?

- Traffic Validation: We compare claimed user numbers against third-party traffic estimates, such as those from Semrush and Ahrefs.

- Decision Making: We assess how decentralized the Web3 project’s governance is, including whether the community can participate through decision-making tools such as Snapshot.org, and whether those votes can meaningfully influence major decisions.

- On-Chain Activity: We analyze active addresses rather than just wallet counts, using Arkham and other chain explorers. Note: Even this data requires an experienced eye, because bots can manipulate it just as they manipulate social metrics.

4. Innovation: The Utility and Authenticity Test

This metric evaluates whether the project introduces a unique and practical solution with verifiable advantages over existing alternatives. We strip away marketing jargon to determine if the project has a legitimate reason to exist.

How to interpret: High scores suggest real differentiation and practical utility; low scores suggest copycat narratives or unverified claims.

- Differentiation: Is this a "fork of a fork" or is there verifiable new technology?

- Problem-Solution Fit: Does the whitepaper clearly explain a real user problem and a viable solution?

- Market Impact: Does the innovation have the potential for measurable real-world adoption beyond speculation?

5. Tokenomics: The Sustainability Check

This metric assesses the economic engine of the protocol to determine if it is designed for long term sustainability or short term extraction. Do multiple revenue streams actually work in practice? Is there a clear, verifiable method to pass value to token holders, or is the project mainly trying to raise the token price through burns?

How to interpret: High scores suggest sustainable incentives and healthier liquidity; low scores suggest inflation, unlock pressure, or weak utility.

- Valuation Gaps: We analyze the Fully Diluted Valuation (FDV) versus the current market cap to identify inflation risks.

- VC Exit Risk: We look for large discounted allocations given to early backers with near-term unlocks, which can create big and prolonged sell pressure.

- Liquidity Depth: Is there sufficient liquidity for users to enter and exit positions with minimal slippage?

- Staking and other Rewards: Where do they come from, inflation, protocol revenue, or the treasury? Would the system still function when incentives stop?
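The Valuation Gaps check can be illustrated with a short calculation. This is a minimal sketch using the common definitions of market cap (price times circulating supply) and FDV (price times maximum supply). The function names and sample figures are hypothetical, and the methodology does not state which ratio indicates a risk.

```python
def fdv_to_market_cap_ratio(price, circulating_supply, max_supply):
    """Return FDV divided by market cap for a token.

    A ratio well above 1 means most of the supply is not yet circulating,
    so future unlocks or emissions can dilute current holders.
    """
    market_cap = price * circulating_supply
    fdv = price * max_supply
    return fdv / market_cap

def circulating_share(circulating_supply, max_supply):
    """Fraction of the maximum supply that is already in circulation."""
    return circulating_supply / max_supply

# Hypothetical token: 25M of 100M tokens circulating at a price of 2.0.
ratio = fdv_to_market_cap_ratio(2.0, 25_000_000, 100_000_000)
share = circulating_share(25_000_000, 100_000_000)
```

In this example the FDV is four times the market cap, meaning only a quarter of the supply is circulating. An auditor would then look at the unlock schedule to judge how quickly the remaining supply reaches the market.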

6. Roadmap: The Delivery Track Record

A roadmap is a promise; we measure the ability to keep it. This metric assesses transparency and the alignment between marketing claims and development reality.

How to interpret: High scores suggest clear milestones and consistent delivery; low scores suggest repeated delays or unverifiable progress.

- Milestone Verification: We compare completed milestones against actual product releases and deployments. It is not just about GitHub code; we check if the dApp is live and usable, and as good as promised!

- Realistic Timelines: Are the goals achievable given the team's resources, or are they perpetually delayed?

- Strategic Clarity: Is the roadmap detailed and specific, or does it rely on vague objectives like "Global Expansion"?

Metric Breakdown: Common Risk Patterns

The diagnostic chart is designed to help readers identify tradeoffs, not to provide a pass or fail verdict. By looking at the shape of the diagnostic chart, you can identify various risk patterns.

Common Risk Patterns:

- High Community + Low Security: “The Over-Marketing Pattern”. Strong promotion and media presence can be valuable, but a low security score may indicate serious red flags.

- High Team + Low Roadmap: “The Execution Gap Pattern”. The team has a strong reputation and credibility, but they are failing to ship products on time or are pivoting away from their original vision.

- High Security + Low Community: “The Zombie Chain Pattern”. The protocol and its backing may be technically sound, but it lacks adoption or a genuine user base. This often occurs with “zombie chains” or abandoned projects, but it can also describe early-stage projects that have not yet built traction.

- High Innovation + Low Tokenomics + Low Team: “The Paper Tiger Pattern”. The project may have strong technology, but a weak economic model, an inexperienced team, and limited resources may cause problems at delivery and thereby increase risk for token holders.
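The patterns above can be read off a set of radar values mechanically. The sketch below is illustrative only: the `HIGH` and `LOW` cutoffs are hypothetical, since the methodology does not define numeric thresholds, and the sample radar is invented.

```python
# ASSUMPTION: hypothetical cutoffs on the 1 to 100 scale; not defined by the methodology.
HIGH = 70
LOW = 40

def risk_patterns(radar):
    """Return the names of the common risk patterns that match a radar."""
    def high(metric):
        return radar[metric] >= HIGH

    def low(metric):
        return radar[metric] <= LOW

    found = []
    if high("community") and low("security"):
        found.append("Over-Marketing")
    if high("team") and low("roadmap"):
        found.append("Execution Gap")
    if high("security") and low("community"):
        found.append("Zombie Chain")
    if high("innovation") and low("tokenomics") and low("team"):
        found.append("Paper Tiger")
    return found

# Hypothetical per-metric consensus values for one project.
sample = {"team": 75, "security": 35, "community": 85,
          "innovation": 60, "tokenomics": 55, "roadmap": 30}
patterns = risk_patterns(sample)
```

A project can match more than one pattern at once, as the sample does. The result is a prompt for closer reading of the auditor comments, not a verdict.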

The Gem Score: Early Stage Potential and Risks

You will notice a separate metric on our platform called the Gem Score, which is also voted on by Crypto OG. The Gem Score is calculated separately and is not included in the aggregate OG Score.

While the OG Score is a strict evaluation of safety and fundamentals, the Gem Score reflects potential*. It highlights how promising a project appears to Crypto OG, particularly when solid fundamentals align with a relatively low market cap.

- Purpose: To highlight early-stage opportunities for further research. A high Gem Score also indicates higher risk, especially when it is combined with a low OG Score.

*Disclaimer: Gem Score is subjective and represents the "growth potential" sentiment of the reviewer. It should never override a critical failure in Security or Tokenomics. This metric is speculative and experimental in nature. It reflects what seasoned eyes see, not financial or investment advice. Always DYOR (Do Your Own Research) before chasing any potential gem.

Integrity & Governance

To ensure the OG Score remains a genuine signal of legitimacy, we enforce strict protocols. Trust cannot be bought, and we do not negotiate scores. Here is how we maintain the quality and independence of our data.

1. Immutable Scores & Zero Tolerance

- No Pay-to-Play: No paid influence, no sponsored ratings. Projects that offer financial incentives or whitelist spots to manipulate or inflate their scores will be flagged immediately with a public notice.

- Permanency: Requests from teams to edit or delete their OG Scores are never accepted. Once a review is published by the auditor, neither the auditor nor the OGAudit team can edit or delete it. The auditor may submit a new re-evaluation review only six months after the first review. The crypto asset’s price is also permanently recorded in the review as of the time it was published.
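The six-month re-evaluation rule can be expressed as a simple date check. This is a sketch under stated assumptions: the helper names are hypothetical, "six months" is treated as six calendar months from the first review's publication date, and the boundary day is assumed to be eligible. The platform's exact rule may differ.

```python
import calendar
from datetime import date

def add_months(d, months):
    """Add calendar months to a date, clamping to the last day of the month."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)

def can_reevaluate(first_review, today, wait_months=6):
    """ASSUMPTION: eligible on or after the date six calendar months later."""
    return today >= add_months(first_review, wait_months)

# Hypothetical review published on 31 August 2025.
first = date(2025, 8, 31)
eligible_from = add_months(first, 6)
```

The clamping step matters for month-end dates: six months after 31 August lands in February, which has no 31st day, so the sketch falls back to the last day of that month.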

2. Auditor Standards & Governance

To become a Crypto Social Auditor at OGAudit, individuals must meet specific criteria. These include at least 1,000 days of verifiable on-chain activity through a permanently linked EVM wallet on the Crypto OG's public profile, payment of a one-time burned registration fee, and agreement to the platform terms and Community Guidelines. We limit the number of active auditors to ensure quality over quantity. As of 11 February 2026, we have 19 Crypto OG who have submitted over 5,800 reviews.

- Reviewer Notes: After rating the OG Score items, Crypto OG must submit a brief, insightful review summarizing their findings and highlighting the project’s strengths and key red flags, often with source links. When relevant, they may also include comparisons with competitors and constructive criticism, including potential areas for improvement.

- Rate Limits: To prevent low-effort content, auditors are limited to a maximum of 30 reviews per day.

- Alignment and Platform Incentives: We hold monthly webinars and AMA sessions to discuss security topics and support consistent application of the methodology across auditors. These live sessions also help with Sybil resistance by confirming that each new onboarded auditor is a real person. We reward contributors based on review quality, insightfulness, and volume, regardless of whether the conclusions are positive or negative. However, the primary incentive is reputation building, and auditors participate with that expectation.

- Badges: We also issue non-transferable (soulbound) NFT badges to eligible auditor wallets to recognize participation in certificate events and milestones. (Post links will be uploaded here after distribution.)
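Two of the auditor standards above are numeric and can be sketched as checks: the 1,000-day on-chain activity requirement and the 30-reviews-per-day limit. The function names are hypothetical. The sketch assumes that activity is measured from the wallet's first transaction date and that the daily limit resets by calendar day, neither of which is specified in the standards.

```python
from datetime import date

MIN_ACTIVITY_DAYS = 1000    # from the auditor criteria
MAX_REVIEWS_PER_DAY = 30    # from the rate limit

def meets_activity_requirement(first_tx_date, today):
    """ASSUMPTION: activity is counted from the wallet's first transaction."""
    return (today - first_tx_date).days >= MIN_ACTIVITY_DAYS

def can_submit_review(review_dates, today):
    """ASSUMPTION: the limit applies per calendar day."""
    submitted_today = sum(1 for d in review_dates if d == today)
    return submitted_today < MAX_REVIEWS_PER_DAY

today = date(2026, 2, 11)
old_wallet_ok = meets_activity_requirement(date(2023, 1, 1), today)
new_wallet_ok = meets_activity_requirement(date(2024, 1, 1), today)
under_limit = can_submit_review([today] * 29, today)
at_limit = can_submit_review([today] * 30, today)
```

Wallet age alone does not cover the other criteria, such as the burned registration fee, agreement to the Community Guidelines, or the live sessions that confirm each auditor is a real person.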

3. Enforcement & Transparency

Reviewer history, methodology, platform terms, and Community Guidelines are public. We have zero tolerance for paid influence or manipulation. Auditors who publish AI-generated, spam, or copy-pasted reviews, or engage in other manipulative conduct that violates the Community Guidelines, will be permanently banned and cannot register again with the same wallet or email. Significant enforcement actions will be publicly documented in our Quarterly Transparency Reports, starting in Q2 2026 and continuing each quarter thereafter.

Want to Join Us? For full eligibility criteria and onboarding steps, please review our OG Onboarding Guide and Community Guidelines.

Always do your own research (DYOR) and avoid investing blindly based on any audit score in crypto. The scores mentioned in this article are provided by OGAudit Crypto Social Auditors (Crypto OG) and are intended to help identify areas where a project may be missing key elements and where it may stand out. These scores do not constitute legal or financial advice and should not be treated as such.

FAQ About the OG Score

What does the OG Score measure that code audits do not?

Code audits verify if a smart contract functions correctly. The OG Score measures human risk, economic sustainability, and the legitimacy of the team’s intent (factors that code audits typically miss).

Can a project pay to improve their OG Score?

No. We do not accept payment for scores. Any attempt to bribe auditors or manipulate the score results in a public flag and potential blacklisting. You can report any review at any time by clicking the three-dot icon below the review. We will also announce a whistleblower program to further strengthen our security measures.

How is bias minimized in the scoring?

To reduce manipulation and improve accountability, we use community flagging, reviewer attribution, and enforcement under the Community Guidelines. We also use advanced AI tools to quality-check reviews and detect potential policy and guideline violations. Each review includes a price stamp recorded at the time of submission to provide context and support public scrutiny of reviewer behavior. Verified violations, including paid reviews, result in enforcement actions up to permanent bans.

How many reviews are needed before an OG Score is displayed?

The OG Score is planned to be displayed only after at least 10 independent audits are submitted. However, until mainnet, we will display scores regardless of the number of reviews due to the limited number of Crypto OG.
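The display rule described in this answer can be sketched as follows. The function and parameter names are hypothetical, and the `mainnet_live` flag is an assumed way of modelling the pre-mainnet exception.

```python
MIN_REVIEWS = 10  # planned minimum number of independent audits

def displayed_score(total_scores, mainnet_live):
    """Return the score to display, or None if it should be hidden.

    Before mainnet, any non-empty set of reviews is shown.
    After mainnet, at least MIN_REVIEWS reviews are required.
    """
    if not total_scores:
        return None
    if mainnet_live and len(total_scores) < MIN_REVIEWS:
        return None
    return sum(total_scores) / len(total_scores)

before_mainnet = displayed_score([60, 80], mainnet_live=False)
after_mainnet = displayed_score([60, 80], mainnet_live=True)
```

With only two reviews, the score is shown before mainnet and hidden afterwards.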

Does a high OG Score guarantee a project is safe?

No. The OG Score helps standardize due diligence and reduce risk, but it cannot predict outcomes or guarantee safety. Always conduct your own research.

Does a low OG Score signal a project is a scam?

Sometimes it can, but a low OG Score does not necessarily mean a project is a scam or will perform poorly. It is designed to help you quickly scan and spot red flags, potential rug-pull signals, and other risk factors, so you can manage risk accordingly.

It is also important to read the auditor's comments. They explain the reasoning behind the scores and clearly highlight strengths and weaknesses based on experience and available evidence.