TL;DR: The OG Score for exchanges is a community rating built from independent audits by verified Crypto OGs. It scores six operational metrics (Usability, Insurance, Fees, Speed, Liquidity, Security) and measures what algorithms cannot: withdrawal reliability under stress, incident response honesty, whether insurance actually pays out, and whether declared volumes reflect reality. Reviews are permanent, time-stamped, and attributed to an on-chain identity. Built to surface risks that traffic-based rankings and paid listings systematically miss. Designed for users who prioritize fund safety over marketing metrics.

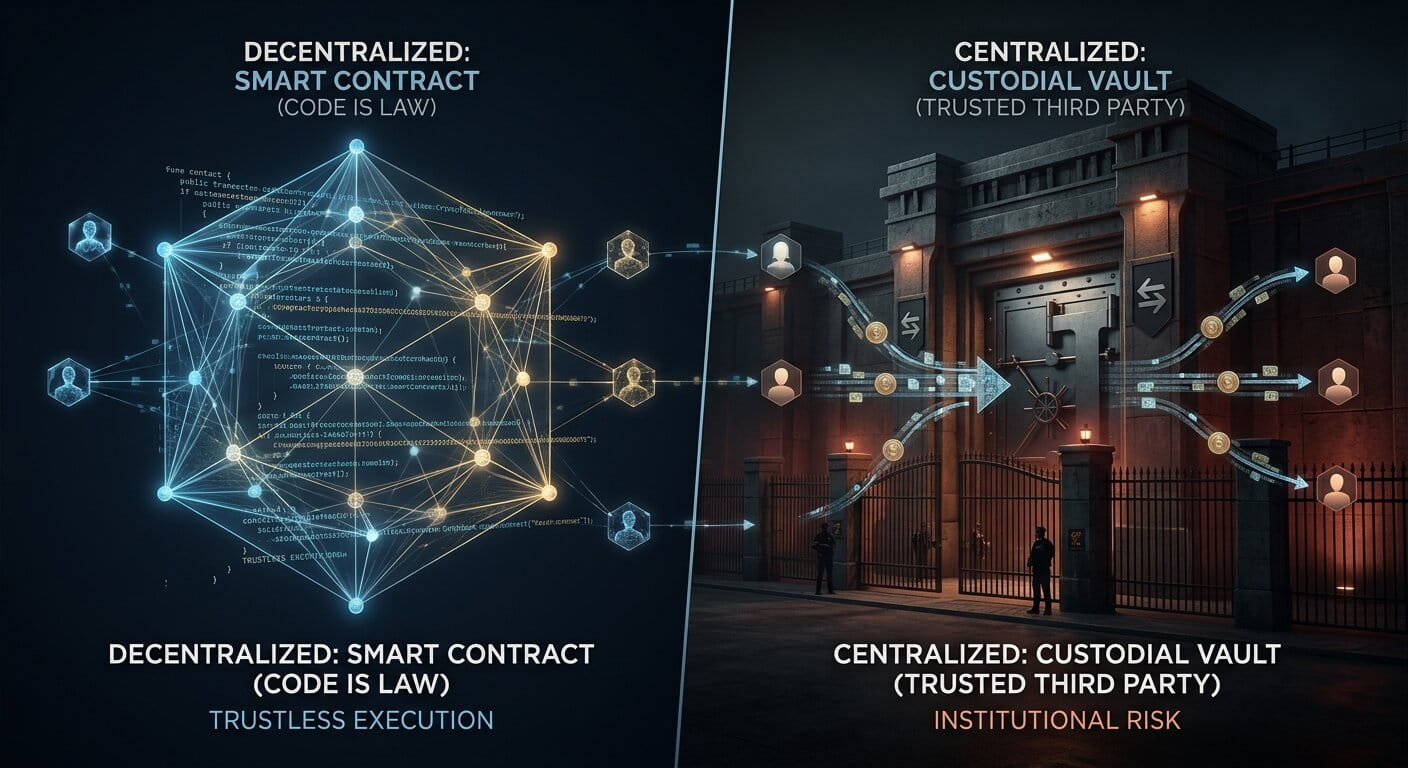

A centralized exchange is not a smart contract. You cannot audit it line by line. You can audit how it behaves. An exchange lives or dies on a handful of measurable things: whether withdrawals clear on the day they are requested, whether user funds are insured and against what, whether fees match the marketing page, and how the platform reacts when the market breaks.

The OGAudit community rates exchanges the way experienced traders rate them in private: from the observable record. Six metrics, scored independently by verified Crypto OGs, are averaged into a single public number. Nothing is paid, nothing is removable, and every reviewer is identifiable on-chain. Auditors may also connect their social media accounts to their profiles, adding another layer of transparency to the review process.

OG Score for Exchanges: Rated by the Experienced Crypto OGs, Not Algorithms

- What it is. A trust rating powered by the OGAudit Social Audit Methodology, applied to centralized exchanges.

- Who creates it. Verified Crypto OGs whose permanently linked EVM wallets have at least 1,000 days of on-chain activity.

- How it is calculated. Each OG auditor scores six operational metrics individually. The public OG Score is the average of total scores across submitted reviews.

- How it is updated. Dynamically, every time a new audit is submitted. Reviews are never edited or removed; re-evaluations are allowed only after six months.

- What it measures. Operational reliability and user-fund safety: withdrawal behavior, insurance enforceability, fee honesty, liquidity depth, incident response.

How to Use OG Score in 30 Seconds

Three steps, in order, when you are evaluating whether to deposit funds on an exchange.

- 1. Check the overall score. A single number for quick triage. 30-50 is generally acceptable. Below 20 means read the reviewer notes before anything else. These thresholds are derived from the historical distribution of rated exchanges; they are not fixed cutoffs.

- 2. Check the weakest metric. The radar shape matters more than the average. A 75 overall with Insurance at 20 is a different risk than a 75 overall with flat metrics.

- 3. Read the reviewer notes. Every score is attributed and explained. Each review highlights strengths alongside weaknesses and red flags, and the written context tells you which issues affect your specific use case (spot trading, derivatives, long-term custody).
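As a rough illustration of steps 1 and 2, the sketch below computes the overall average, the weakest metric, and the gap between the strongest and weakest metric for a six-metric radar. It is a hypothetical helper, not OGAudit code: it assumes per-metric averages on the 1 to 100 scale and uses the max-minus-min spread as a simple stand-in for radar shape. The example reproduces the two 75-overall exchanges described in step 2.

```python
def triage(metrics: dict[str, float]) -> dict:
    """Summarize a six-metric radar for quick triage (illustrative helper)."""
    overall = sum(metrics.values()) / len(metrics)      # step 1: the headline number
    weakest = min(metrics, key=metrics.get)             # step 2: the metric to read first
    spread = max(metrics.values()) - metrics[weakest]   # how lopsided the radar shape is
    return {"overall": round(overall, 1), "weakest": weakest, "spread": spread}


# Two hypothetical exchanges with the same 75 overall score
lopsided = {"Usability": 86, "Insurance": 20, "Fees": 86,
            "Speed": 86, "Liquidity": 86, "Security": 86}
flat = {name: 75 for name in lopsided}

print(triage(lopsided))  # {'overall': 75.0, 'weakest': 'Insurance', 'spread': 66}
print(triage(flat))      # {'overall': 75.0, 'weakest': 'Usability', 'spread': 0}
```

Both exchanges share the same headline number; only the spread and the weakest metric reveal that the first one carries a concentrated Insurance risk.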

Why Centralized Exchanges Need a Different Framework Than Coins

Our Coin OG Score evaluates a project across dimensions like team, tokenomics, roadmap, innovation, security, and community. That framework assumes the thing being rated is a codebase and a team trying to build something. A centralized exchange is a different kind of institution. It is a custodian. It holds other people's money, and the questions worth asking about a custodian are operational, not developmental.

Unlike a development project, an exchange's quality depends on whether its operational infrastructure is robust enough to handle real-world stress, whether its withdrawal systems clear under load, and whether its leadership makes decisions that protect user funds when it matters most. Operational and governance risks are at least as important as technical ones.

Traditional audits such as cybersecurity or reserve audits, however good, cannot answer whether an exchange will execute orders and honor withdrawals during a panic, whether slippage on a six-figure market order during a flash crash will destroy a position, or whether an insurance fund is a genuine reserve rather than a marketing page.

These are the questions that matter for anyone depositing funds, and they live entirely outside the traditional audit layer. The exchange OG Score uses six metrics that map directly to what a user experiences: can I use it smoothly, are my funds protected, do fees match the claim, does the platform respond under load, is there enough depth for my size, and does the team handle incidents responsibly.

How the OGAudit Trust Score Differs From Algorithmic Ratings

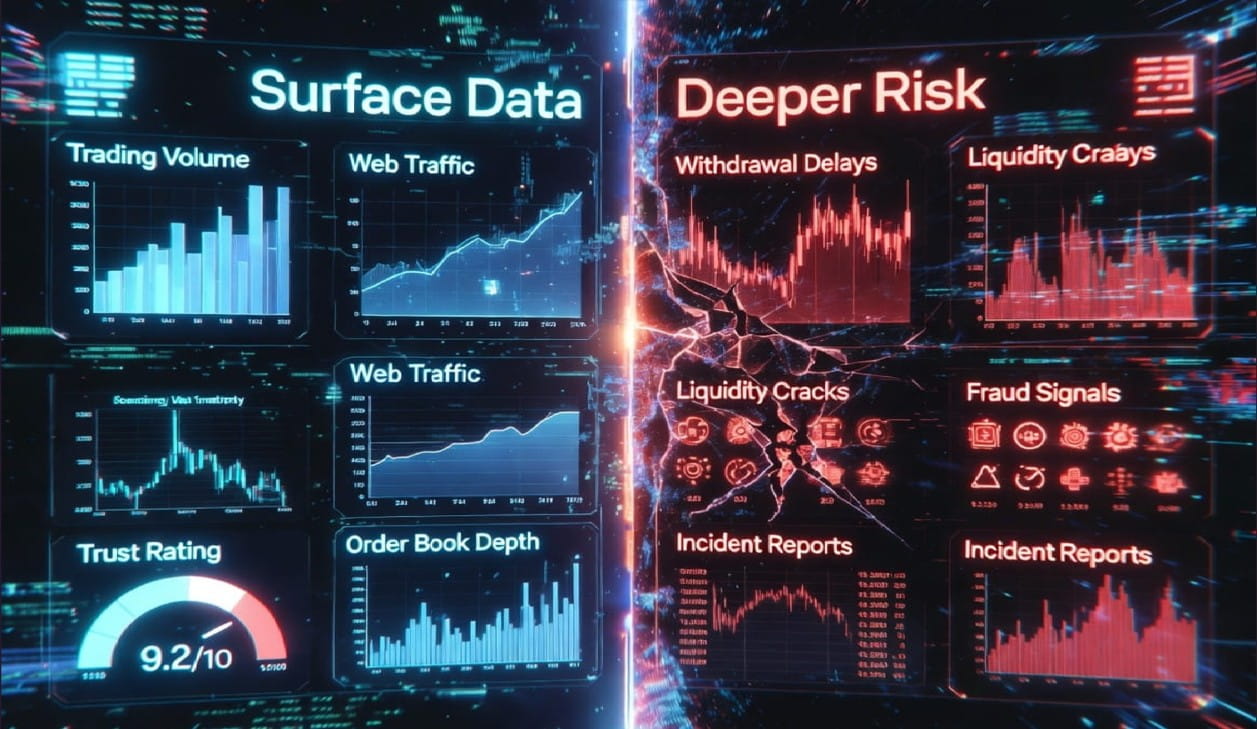

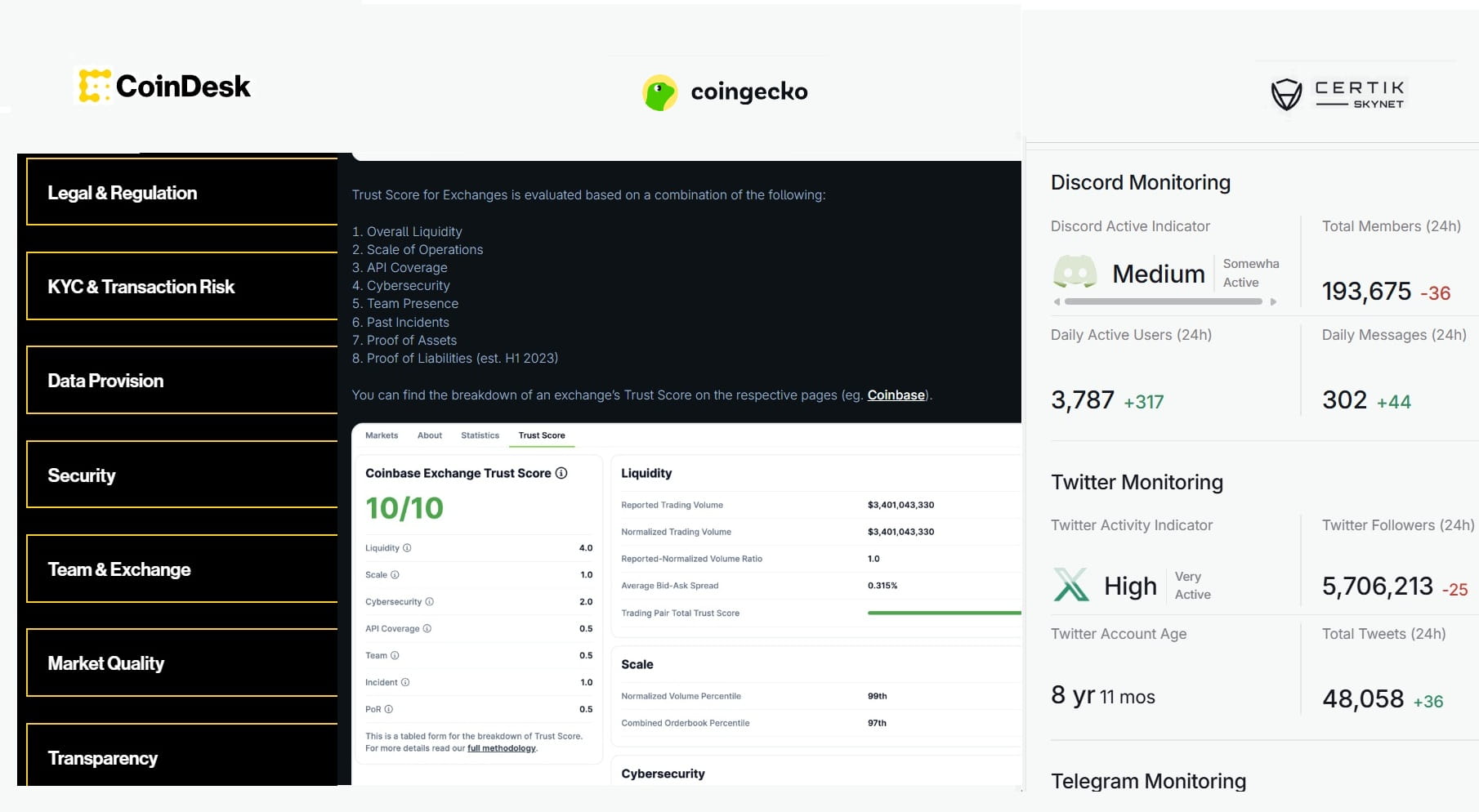

Most existing exchange ratings come from algorithms measuring what is easy to count. The CoinGecko Trust Score evaluates exchanges across seven components: normalized trading volume, order book depth, cybersecurity (assessed via Hacken), API coverage, team presence, scale of operations, and proof of reserves. It produces a score on a scale of 1 to 10.

The CoinDesk Exchange Benchmark assesses more than 100 qualitative and quantitative metrics across eight categories (market quality, security, regulation, KYC, transparency, data provision, exchange leadership, and negative events), assigning grades from AA to F. CertiK Skynet evaluates exchanges across six categories using on-chain data, code-level signals, and real-time sentiment analysis, with tier labels from AAA to F.

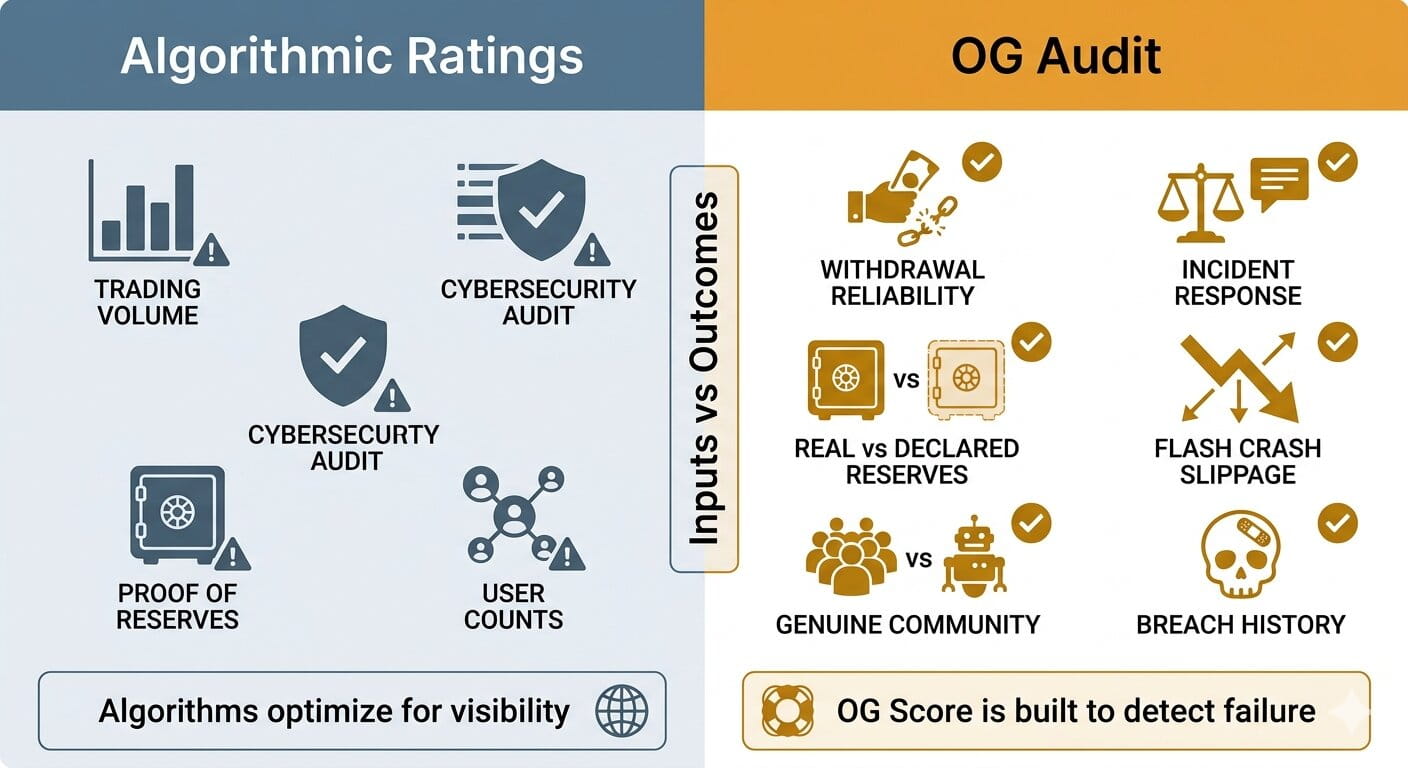

These systems are useful, but they measure inputs. The OG Score measures outcomes: our auditors verify those outputs, translate them into a simplified format, add expert insights, and present them in a way that is practical for real-world decisions.

| What algorithms measure | What OG Score adds | Why it matters |
|---|---|---|
| Reported trading volume | Actual withdrawal reliability under stress | Volume can be wash-traded. Withdrawal failure during a crash is what costs users money. |
| Order book spread at rest | Slippage during real flash crashes | Calm-market spread does not predict what a market order does at 3am during a 10%+ drop. |
| Declared reserves (Proof of Reserves reports) | Withdrawal accessibility under stress: whether declared and actual reserves align | Declared reserves can mislead; what matters is surplus over liabilities when users withdraw. |
| Web traffic estimates, declared user counts, and social media follower figures | Analysis separating real engagement from bots and paid activity | Follower and visitor metrics are easy to inflate; verified OG insights reveal true platform health. |
| Cybersecurity audits (system and code-level scans) | Historical breach record, developer team quality, and post-incident response behavior | A clean audit lowers known risks but doesn't ensure safety; incident history matters more. |
| Self-reported security features | Breach history and post-incident behavior | Feature lists are marketing. Incident response is evidence. |

What sets the OG Score apart is the flexibility and depth of experience behind it. The algorithmic systems above are systematic, but they miss what only a veteran trader on the ground sees: the red flag that has not yet made it into any report, the compensation program that excluded most claimants on a technicality, or the pattern of account issues that appears after users record large gains.

Declared volume, declared user numbers, and reserve figures all look clean on paper, and all of them are among the easiest metrics to manipulate. OG reviewers track real trading and withdrawal experiences, note platform responses to user complaints, and flag discrepancies between stated figures and observable behavior. Traditional rankings optimize for visibility. OG Score is built to detect failure.

Methodology in Practice: How the Exchange OG Score Is Calculated

The framework is applied continuously, not published as theory. As of May 06, 2026, the OG Score has been applied across 46 centralized exchanges through 260+ independent audits submitted by 32 verified Crypto OGs. See updated numbers on our exchange audits home page.

The methodology is reviewed quarterly and published alongside a transparency report.

The calculation is structured to reduce manipulation and give readers a score they can trace back to specific reviewers and specific evidence.

Evidence standards

Before scoring, OGs rely on publicly verifiable sources: official exchange documentation, Proof of Reserves reports, on-chain data for the exchange's known wallets (analyzed using tools such as Arkham, Dune Analytics, and CoinGlass for advanced tracking), and fee schedules from the platform's own pages.

Additional sources include incident disclosures, official and representative social media statements (which are often overlooked by traditional audits), valid customer complaints published on Trustpilot, Sikayetvar, and other social platforms, and third-party traffic and volume estimates. Private documents that cannot be independently verified are not accepted.

Calculation workflow

- 1. Independent audit. A Crypto OG rates each of the six metrics on a 1 to 100 scale using gathered evidence.

- 2. Permanent record. The review is written to the exchange page, linked with the OG's public profile, and time-stamped. Neither the reviewer nor OGAudit can edit or delete it afterward.

- 3. Metric aggregation (the radar). The platform averages each metric individually across all submitted reviews, producing the Exchange Evaluation Metrics radar chart that shows where consensus is strong and where it splits.

- 4. Final score consensus. The public OG Score is the simple average of total scores from all independent reviews. Unweighted aggregation; eligibility gating ensures only verified OGs are included.
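Steps 3 and 4 reduce to two averages. The Python sketch below assumes that a review's "total score" is the plain mean of its six metric scores; the methodology does not spell out that detail, so treat it as an assumption. The `Review` class and the reviewer handles are hypothetical. Under that assumption, and when every review scores all six metrics, the OG Score equals the mean of the radar values.

```python
from dataclasses import dataclass

METRICS = ("Usability", "Insurance", "Fees", "Speed", "Liquidity", "Security")


@dataclass
class Review:
    reviewer: str            # hypothetical reviewer handle
    scores: dict[str, int]   # one score per metric, 1 to 100

    def __post_init__(self) -> None:
        for m in METRICS:
            if not 1 <= self.scores[m] <= 100:
                raise ValueError(f"{m} score out of range: {self.scores[m]}")

    def total(self) -> float:
        # Assumption: a review's total score is the mean of its six metric scores.
        return sum(self.scores[m] for m in METRICS) / len(METRICS)


def radar(reviews: list[Review]) -> dict[str, float]:
    """Step 3: average each metric independently across all reviews."""
    return {m: sum(r.scores[m] for r in reviews) / len(reviews) for m in METRICS}


def og_score(reviews: list[Review]) -> float:
    """Step 4: unweighted mean of per-review totals."""
    return sum(r.total() for r in reviews) / len(reviews)


reviews = [
    Review("og_a", {"Usability": 80, "Insurance": 40, "Fees": 70,
                    "Speed": 75, "Liquidity": 85, "Security": 60}),
    Review("og_b", {"Usability": 70, "Insurance": 20, "Fees": 65,
                    "Speed": 80, "Liquidity": 90, "Security": 55}),
]
print(radar(reviews))
print(round(og_score(reviews), 2))  # 65.83
```

Keeping the radar and the final score as separate averages is what lets the chart show where reviewers agree on a metric even when the single number hides it.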

Reviewer standards and integrity safeguards

- Identity and eligibility. Each reviewer is linked to a permanent EVM wallet with a minimum of 1,000 days of on-chain history. A one-time burned registration fee applies. By registering, reviewers accept OGAudit's Terms of Use, Community Guidelines, and the Social Audit Methodology, ensuring unbiased and non-sponsored contributions.

- Review conduct. Written notes are required alongside numeric scores. Rate limit: 30 reviews per day. Each OG can review a given exchange once every six months; the same rule applies to re-evaluations.

- No pay-to-play. OGAudit does not accept payment for scores. Any attempt to influence reviews results in public flagging of the exchange and permanent bans for involved accounts.

- Permanency and transparency. Reviews and scores cannot be edited or removed. Each submission is timestamped and attributed. Significant enforcement actions are disclosed in quarterly transparency reports.

- Enforcement. AI-generated, copy-pasted, or non-genuine reviews are not incentivized and are actively penalized. Repeated violations result in permanent account bans, rendering the associated wallet unusable on the platform.
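The eligibility and rate-limit rules can be expressed as a single gate. This is a hypothetical sketch, not OGAudit's enforcement logic: it assumes wallet age is counted from the wallet's first on-chain transaction, that "30 reviews per day" is a count of the reviewer's submissions on the current date, and that "six months" is approximated as 182 days.

```python
from datetime import date, timedelta
from typing import Optional

MIN_WALLET_AGE = timedelta(days=1000)   # minimum on-chain history
DAILY_LIMIT = 30                        # reviews per reviewer per day
REVIEW_COOLDOWN = timedelta(days=182)   # assumption: "six months" taken as 182 days


def can_submit(first_tx: date, today: date, reviews_today: int,
               last_review_of_exchange: Optional[date]) -> tuple[bool, str]:
    """Return (allowed, reason) for a reviewer attempting to audit one exchange."""
    if today - first_tx < MIN_WALLET_AGE:
        return False, "wallet history shorter than 1,000 days"
    if reviews_today >= DAILY_LIMIT:
        return False, "daily limit of 30 reviews reached"
    if (last_review_of_exchange is not None
            and today - last_review_of_exchange < REVIEW_COOLDOWN):
        return False, "this exchange was reviewed less than six months ago"
    return True, "ok"


print(can_submit(date(2023, 1, 1), date(2026, 5, 6), 3, None))
print(can_submit(date(2023, 1, 1), date(2026, 5, 6), 3, date(2026, 1, 15)))
```

Checking wallet age first means an ineligible wallet is rejected before any per-exchange state is consulted.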

The Six OG Score Metrics for a Reliable Exchange Evaluation

Each metric scores a specific operational dimension. A high score in one does not compensate for a failure in another. A platform can be the fastest and cheapest in the market and still score poorly overall if it cannot protect funds or handle stress.

1. Usability: Trading Interface and User Experience

Reflects user experience, platform navigation, tool accessibility, and compatibility across devices.

How to evaluate. High scores suggest a clean interface that does not create errors under time pressure. Low scores suggest friction in basic flows: confusing order placement, laggy mobile app, buried withdrawal options, or inconsistent behavior between web and app.

- Onboarding and navigation. Can a new user complete signup, verification, and a first trade without searching for help articles?

- Order placement. Are order types clearly labeled, are fees visible before confirmation, and does the interface warn on leverage?

- Cross-device parity. Does the mobile app match the web experience, or do important features only work in one place?

- Compliance friction. Repeated KYC requests after initial verification, or "additional documents required" loops that never resolve, are indicators of a broken compliance system, not a security feature. A freeze appeal process that is undocumented or lacks published resolution times is a red flag regardless of how good the UI looks.

- Jurisdiction mismatch. If a user is not legally permitted to use a platform in their country, account restriction or freeze is a predictable outcome, not an edge case. This is something users must verify for themselves based on their own location and circumstances; OGAudit flags known geo-restrictions in individual exchange reviews where documented, but cannot assess every user's jurisdiction.

- Red flags. Hidden withdrawal paths, inconsistent interfaces, frequent app crashes, slow response during volatile sessions.

2. Insurance: Does the Exchange Actually Protect Your Funds?

Assesses whether user funds are covered by a third-party insurer or an internal insurance fund, the credibility of the provider, and how much of the protection is an enforceable commitment versus marketing language.

How to evaluate. Insurance does not replace solvency or custody safety. It is a last-resort mechanism, not a primary protection layer. A high score requires a named fund with a published balance, clear reimbursement terms, and a track record of honoring them. Low scores flag vague promises, undisclosed balances, or coverage that failed during real incidents.

- Fund existence and size. Is there a named fund with a verifiable on-chain or audited balance?

- Reserve composition. Self-issued token concentration above 30-50% of reserves is a structural risk signal, the pattern FTX established with FTT. An exchange backing its reserve with an asset it prints is not solvent; it is circular.

- Customer asset treatment. Terms of service allowing the platform to "use" or "deploy" customer assets in any context are a soft rehypothecation flag. This is a legal commitment, not a UI detail.

- Coverage terms. What events are covered? What is explicitly excluded?

- Historical payouts. When an incident occurred, were users actually reimbursed, or were some excluded on technicalities?

- Third-party coverage. Is there independent insurance underwriting beyond the platform's own reserve? "Insured" without a named underwriter is marketing.
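The reserve-composition check is simple arithmetic. This sketch computes the share of reserves held in the exchange's own token and labels it against the 30% and 50% ends of the range mentioned above. Treating the band between them as "review closely" is an assumption, and the reserve figures and the `XTK` ticker are hypothetical.

```python
def self_issued_share(reserves_usd: dict[str, float], native_token: str) -> float:
    """Fraction of total reserve value held in the exchange's own token."""
    total = sum(reserves_usd.values())
    if total <= 0:
        raise ValueError("reserve total must be positive")
    return reserves_usd.get(native_token, 0.0) / total


def classify_share(share: float, low: float = 0.30, high: float = 0.50) -> str:
    # Assumed banding of the 30-50% range; not an official OGAudit cutoff.
    if share > high:
        return "structural risk"
    if share > low:
        return "review closely"
    return "within range"


# Hypothetical reserve snapshot valued in USD; XTK stands in for an exchange token
reserves = {"BTC": 2.0e9, "USDT": 1.0e9, "XTK": 2.0e9}
share = self_issued_share(reserves, "XTK")
print(f"{share:.0%} self-issued -> {classify_share(share)}")  # 40% self-issued -> review closely
```

The USD value of a self-issued token is marked to a market the exchange itself can influence, so this ratio can understate the circularity rather than overstate it.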

3. Fees: Hidden Costs and Real Trading Expenses

Evaluates trading fees, withdrawal fees, and the real cost of common actions after discounts and hidden charges are factored in. Evaluating fees in isolation misses the larger picture: how an exchange generates revenue is itself a risk signal.

How to evaluate.

- Spot and derivatives fees. Maker and taker rates across the main products.

- Withdrawal fees. Per-asset, compared against the network fee the exchange actually pays.

- Spread markup. Zero-fee trading often shifts cost into the spread. A platform with no visible fees but consistently worse execution is not cheaper.

- Discount structure. Native token discounts, VIP tiers, fee rebates. Are they accessible to typical users or only to high-volume traders?

- Revenue model signals. Exchanges running proprietary trading desks on their own order books put the house on the other side of the trade. Above-market yield products without a transparent revenue source are a different version of the same problem: Celsius and BlockFi used yield products to fund operations until the underlying lending positions collapsed.

- Red flags. Zero-fee marketing with wide spreads, withdrawal fees several times higher than network cost, tier structures complex enough that the effective rate is impossible to predict.
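Two of the fee checks above can be put into numbers. The sketch below estimates the one-way cost of a small market order as the taker fee plus half the quoted spread relative to the mid price (a common approximation that ignores depth and price impact), and the withdrawal-fee markup as the ratio of the fee charged to the network fee. All quotes and fees in the example are hypothetical.

```python
def effective_cost_bps(taker_fee_bps: float, bid: float, ask: float) -> float:
    """Approximate one-way cost of a small market order, in basis points."""
    mid = (bid + ask) / 2
    half_spread_bps = (ask - bid) / 2 / mid * 10_000
    return taker_fee_bps + half_spread_bps


def withdrawal_markup(fee_charged: float, network_fee: float) -> float:
    """How many times the actual network fee the user is charged."""
    return fee_charged / network_fee


# Hypothetical venues: 10 bps taker fee with a tight book vs "zero fees" with a wide one
print(round(effective_cost_bps(10, 99.99, 100.01), 2))  # ~11 bps
print(round(effective_cost_bps(0, 99.80, 100.20), 2))   # ~20 bps
# Hypothetical BTC withdrawal: 0.0005 BTC charged vs 0.00005 BTC paid to the network
print(round(withdrawal_markup(0.0005, 0.00005), 1))     # ~10x
```

In this example the "zero-fee" venue is the more expensive one, which is the spread-markup pattern described above.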

4. Speed: Withdrawal Times and Platform Performance Under Stress

Measures deposit and withdrawal speeds and platform responsiveness under load. It is the most manipulated metric in exchange marketing, and among the easiest to verify independently.

How to evaluate.

- Withdrawal timing. From request to on-chain broadcast. Stated window versus OG reviewer observation.

- Order execution under stress. Does the matching engine keep up when volume spikes, or do users see failed orders and bad fills?

- Uptime during volatility. The test is not a normal weekday. Reliability matters most during market-moving events such as unexpected BOJ or Fed announcements. Maintenance windows timed with major market events are an operational red flag, not a coincidence.

- Red flags. Withdrawals stuck in "processing" for days, customer support silence during incidents.
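Comparing a stated withdrawal window against observed timings is mostly a matter of looking at the tail rather than the typical case. This sketch takes hypothetical request-to-broadcast durations in minutes and reports the median, the share of withdrawals that exceeded the stated window, and the worst case; the 30-minute window and the samples are assumptions for illustration.

```python
from statistics import median


def withdrawal_report(observed_minutes: list[float], stated_max_minutes: float) -> dict:
    """Compare observed request-to-broadcast times with the exchange's stated window."""
    late = [t for t in observed_minutes if t > stated_max_minutes]
    return {
        "median_min": median(observed_minutes),
        "late_share": len(late) / len(observed_minutes),
        "worst_min": max(observed_minutes),
    }


# Hypothetical observations against a stated 30-minute window
observed = [4, 6, 5, 45, 7, 180, 5, 6]
print(withdrawal_report(observed, 30))
# {'median_min': 6.0, 'late_share': 0.25, 'worst_min': 180}
```

Here the median looks healthy while a quarter of withdrawals missed the stated window, which is why the tail, not the typical case, belongs in the score.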

5. Liquidity: Order Book Depth and Volume Authenticity

Indicates order book depth, real trade volume, and the ability to handle large orders without moving price. This is also where volume inflation, wash trading, and self-reporting bias show up.

How to evaluate.

- Order book depth. A practical benchmark: if a mid-sized order ($1K–$10K on major pairs, $100–$1K on low-cap assets) moves price beyond expected slippage ranges, reported liquidity is overstated. Sudden spread widening or adverse price movement immediately after order placement may indicate non-organic liquidity or latency-based execution disadvantages, exposing traders to unnecessary slippage.

- Volume credibility. We compare independently aggregated volume against the exchange's reported figures and, where verifiable datasets exist, against on-chain inflow data. Artificial volume has become commoditized through retail-facing wash-trading services, making self-reported figures unreliable on their own. Large discrepancies between reported and independently observed volume are treated as indicators of inflated or non-organic activity.

- Spread quality. Artificially tight spreads with low fill rates indicate non-organic liquidity. Wide and erratic spreads point to thin organic books.

- Listing behavior. High listing velocity combined with frequent delistings signals a fee-extraction model rather than asset curation.

- Red flags. Volume inconsistent with on-chain flow, order books thinning visibly during off-peak sessions, wash-trading patterns flagged by external tools.
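The order-book depth benchmark can be approximated by walking the ask side of a book snapshot with a market buy of a given size. The sketch below assumes asks are `(price, size)` pairs sorted best-first with size in base units, measures slippage as the average fill price's distance above the best ask, and ignores fees, latency, and book changes during execution. The book levels are hypothetical; a real check would use a live snapshot from the exchange.

```python
def market_buy_slippage_bps(asks: list[tuple[float, float]], notional: float) -> float:
    """Walk ask levels (price, size in base units), best first, spending `notional`
    in quote currency. Returns the average fill price's distance above the best
    ask, in basis points."""
    if notional <= 0:
        raise ValueError("notional must be positive")
    remaining, spent, filled = notional, 0.0, 0.0
    for price, size in asks:
        take = min(remaining, price * size)  # quote value taken from this level
        filled += take / price
        spent += take
        remaining -= take
        if remaining <= 0:
            break
    if remaining > 1e-9:
        raise ValueError("book too thin to fill the order")
    avg_price = spent / filled
    best_ask = asks[0][0]
    return (avg_price - best_ask) / best_ask * 10_000


# Hypothetical ask side of a book: (price, size)
asks = [(100.0, 20.0), (100.5, 30.0), (101.0, 50.0)]
print(f"{market_buy_slippage_bps(asks, 1_000):.1f} bps")  # 0.0 bps: fits in the top level
print(f"{market_buy_slippage_bps(asks, 5_000):.1f} bps")  # 29.9 bps: eats into the second level
```

Running the same order size across exchanges, or across calm and volatile sessions on one exchange, is closer to what the benchmark asks than reading the quoted spread alone.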

6. Security: Breach History, Proof of Reserves, Incident Response

Examines security infrastructure, breach history, fund protection, and post-incident behavior.

How to evaluate. The dominant exchange failure mode in 2024–2026 has shifted from hot-wallet hacks to account-level compromise: phishing, SIM swaps, API key leaks, credential stuffing. The exchange's job is to make individual account compromise hard even when the user makes a mistake.

- Breach history. Both the events and the response. A single well-handled incident can score higher than a clean sheet at a platform that has never been stress-tested. The 2024 WazirX case is instructive: the breach triggered the incident, but the 16-month fund freeze and the rejected socialization-of-losses proposal were the operational failure. This is one documented case; individual exchange outcomes vary.

- Proof of Reserves. Assets-only PoR is insufficient: an exchange holding $5B in assets against $6B in liabilities passes an assets-only audit and is still insolvent. Liabilities-included, Merkle-tree-attested proofs are the meaningful standard.

- Access controls. Hardware-key 2FA should be offered. SMS 2FA is better than no second factor, but it opens the SIM-swap attack surface. Withdrawal address whitelisting with enforced 24–48 hour cooldowns for new withdrawal addresses, anti-phishing codes on outbound email, and auditable device/IP session logs are the baseline.

- Security testing. A public bug bounty program with disclosed payout history signals adversarial review. No bounty means no external incentive to find the next exploit before attackers do.

- Incident response. If there was an incident, was disclosure fast and honest? Were users compensated fairly or excluded on narrow terms? Insurance fund size, draw history, and composition belong here, especially for derivatives-heavy exchanges where liquidation systems depend on it. An exchange that refuses to publish this is hiding it.

- Red flags. Repeated incidents without systemic fixes, reserve proofs excluding liabilities, compensation programs reaching only a fraction of affected users, regulatory fines for market conduct or AML failures.
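To see what a Merkle-tree attestation gives a user, the sketch below builds a tree over hypothetical customer balances and verifies one customer's inclusion proof against the published root. It is a simplified illustration under stated assumptions: leaves are SHA-256 hashes of `user_id:balance`, odd-sized levels duplicate their last node, and it uses a plain Merkle tree rather than the Merkle sum tree that liabilities-included schemes typically use to bind total liabilities to the root. Real exchange schemes differ in leaf format and salting. The final lines apply the $5B against $6B example from the Proof of Reserves point.

```python
import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def leaf(user_id: str, balance_cents: int) -> bytes:
    # Assumed leaf format; real schemes typically add a per-user salt or nonce.
    return h(f"{user_id}:{balance_cents}".encode())


def build_levels(leaves: list[bytes]) -> list[list[bytes]]:
    """All tree levels, from the leaves up to the single root."""
    levels = [leaves]
    while len(levels[-1]) > 1:
        cur = levels[-1]
        if len(cur) % 2 == 1:
            cur = cur + [cur[-1]]  # duplicate the last node on odd-sized levels
        levels.append([h(cur[i] + cur[i + 1]) for i in range(0, len(cur), 2)])
    return levels


def proof_for(levels: list[list[bytes]], index: int) -> list[tuple[bytes, bool]]:
    """Sibling hashes from leaf to root, each flagged True if the sibling is on the left."""
    path = []
    for level in levels[:-1]:
        if len(level) % 2 == 1:
            level = level + [level[-1]]
        sibling = index ^ 1
        path.append((level[sibling], sibling < index))
        index //= 2
    return path


def verify(leaf_hash: bytes, path: list[tuple[bytes, bool]], root: bytes) -> bool:
    node = leaf_hash
    for sibling, sibling_is_left in path:
        node = h(sibling + node) if sibling_is_left else h(node + sibling)
    return node == root


def reserve_ratio(assets: float, liabilities: float) -> float:
    return assets / liabilities


# Hypothetical customer balances in cents
balances = {"alice": 150_000, "bob": 250_000, "carol": 990_000}
levels = build_levels([leaf(u, b) for u, b in balances.items()])
root = levels[-1][0]
bob_proof = proof_for(levels, 1)
print(verify(leaf("bob", 250_000), bob_proof, root))  # True
print(verify(leaf("bob", 1), bob_proof, root))        # False: balance does not match
print(reserve_ratio(5e9, 6e9) >= 1.0)                 # False: insolvent once liabilities count
```

Inclusion proofs only show that a user's balance was counted; the solvency question still depends on comparing assets against the full liability total.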

Reading the Radar Chart: Common Exchange Risk Patterns

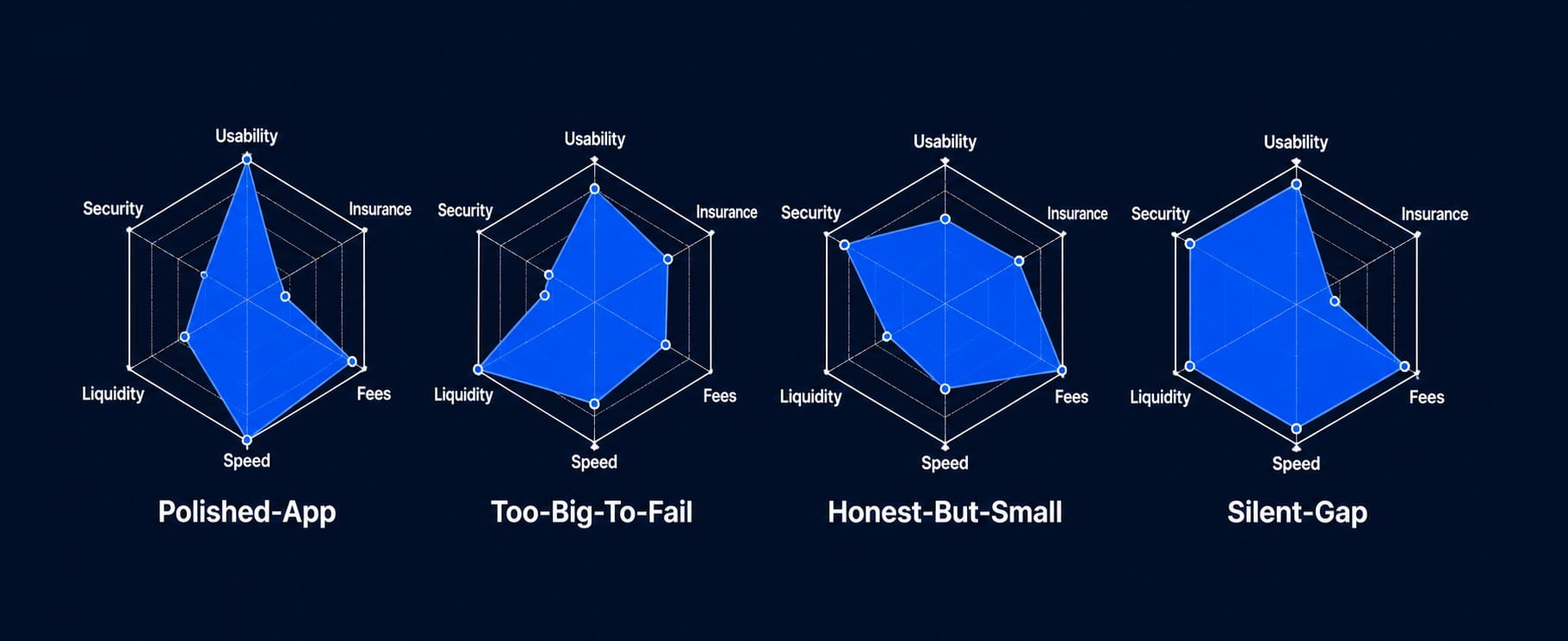

The radar chart built from the six metrics is useful beyond the raw average. The shape tells you what kind of risk you are looking at. These are the patterns OG reviewers see most often:

- High Usability and Speed, Low Insurance and Security. The polished-app pattern. Pleasant to use and fast on execution, but back-end protection is thin. Common with newer retail-focused exchanges chasing growth before investing in custody infrastructure.

- High Liquidity, Low Security. The too-big-to-fail pattern. Dominant market share masks governance, compliance, or operational weaknesses. Concentration risk becomes the user's problem during an incident.

- High Fees score, Low Liquidity. The honest-but-small pattern. Clear pricing, thin books. Acceptable for small orders, dangerous for large ones.

- High Security, Low Usability. The institutional pattern. Strong custody, weak retail experience. Often the right choice for size, the wrong choice for active trading.

- High across the board, Low Insurance. The silent-gap pattern. Everything works well until something does not, and reimbursement terms turn out to be narrower than the marketing suggested.
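The patterns above can be checked mechanically once "high" and "low" are defined. The sketch below assumes cutoffs of 70 and 40 on the 1 to 100 scale, which are illustrative values rather than OGAudit thresholds, and returns every pattern a radar matches. The radar values in the example are hypothetical.

```python
HIGH, LOW = 70, 40  # assumed cutoffs on the 1-100 scale, for illustration only

# pattern name -> (metrics that must be high, metrics that must be low)
PATTERNS = {
    "polished-app": (("Usability", "Speed"), ("Insurance", "Security")),
    "too-big-to-fail": (("Liquidity",), ("Security",)),
    "honest-but-small": (("Fees",), ("Liquidity",)),
    "institutional": (("Security",), ("Usability",)),
    "silent-gap": (("Usability", "Fees", "Speed", "Liquidity", "Security"), ("Insurance",)),
}


def match_patterns(radar: dict[str, float]) -> list[str]:
    """Return every named pattern whose high/low conditions the radar satisfies."""
    return [
        name
        for name, (highs, lows) in PATTERNS.items()
        if all(radar[m] >= HIGH for m in highs) and all(radar[m] <= LOW for m in lows)
    ]


retail_app = {"Usability": 85, "Insurance": 30, "Fees": 60,
              "Speed": 80, "Liquidity": 55, "Security": 35}
quiet_gap = {"Usability": 80, "Insurance": 25, "Fees": 75,
             "Speed": 78, "Liquidity": 82, "Security": 74}
print(match_patterns(retail_app))  # ['polished-app']
print(match_patterns(quiet_gap))   # ['silent-gap']
```

Returning a list rather than a single label avoids forcing a priority order when a radar fits more than one pattern.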

Honest Limitations: Getting Better Every Day

OG Score is a diagnostic tool, not a guarantee. It reflects real-world observations from active Crypto OGs who trade on these platforms, track on-chain flows, and often detect risks before automated systems do. This human signal is directional, not predictive.

The score surfaces observable risk patterns and reviewer consensus. It does not forecast outcomes. An exchange that scores well today can fail tomorrow due to new attack vectors, regulatory action, or hidden liabilities. Treat it as one input alongside your own due diligence on custody, jurisdiction, and asset exposure. Past security measures and team transparency do not guarantee future safety. Any centralized exchange can be hacked, and you may lose your funds. The model evaluates historical and operational resilience, not future risk elimination. Custody risk on any centralized platform remains the user's responsibility.

OGAudit is a learning system. The methodology evolves continuously to adapt to new forms of manipulation and failure patterns. As the ecosystem changes, so does the way risk is detected and interpreted.

Because the community consists of active builders, traders, and analysts, credible warnings about weakening or compromised platforms often appear here before they are reflected in aggregated data or public reports. Being connected to this layer means seeing early signals before they become widely visible.

How to Compare Crypto Exchanges Using OG Score

Every exchange rated on OGAudit carries a live OG Score, a radar chart across the six metrics, and the full history of permanent reviewer notes. Browse the current rankings to see how the platforms you use compare, or apply to become a verified Crypto OG and contribute your own reviews. Learn from real-world exchange failures and red flags: seven real-world scenarios show what to watch for when choosing a crypto exchange and what to avoid. See our Crypto Exchange Safety Checklist article to understand how to identify a reliable exchange.

Also:

- Browse the full list of rated exchanges with live OG Scores.

- Read the Crypto Social Audit methodology for the framework behind every score on OGAudit.

- Review the Coin OG Score methodology for how crypto projects are evaluated.

- Register now and apply for OG onboarding if you meet the wallet-age (1,000 days) and contribution standards.

About the author:

Kripto Raptor is the Chief OG at OGAudit.com and an independent Web3 researcher, blockchain analyst, and entrepreneur. Active in crypto since 2016 and working full-time in the industry since 2020, he specializes in evaluating Web3 and fintech projects through security analysis, community behavior, and market dynamics. At OGAudit, he publishes data-driven research, crypto social audit reviews, and in-depth project evaluations focused on transparency, risk assessment, and real-world performance.

Always do your own research (DYOR) before using any crypto exchange. The OG Score and reviewer observations in this article are provided by verified Crypto OGs and do not constitute legal, financial, or investment advice. OGAudit does not endorse or approve any exchange.

Frequently Asked Questions About OGAudit Ratings and OG Score Methodology

What does the exchange OG Score measure that other audits do not?

Cybersecurity and reserve audits verify system integrity and a custodian's declared financial structure. They do not assess the real intent of the team, the quality of incident response when things go wrong, or the patterns of exaggerated marketing claims that diverge from user experience.

The OG Score measures what matters operationally: withdrawal reliability under stress, actual insurance and reserve enforceability, fee honesty, real liquidity depth, and how a platform treats its users when an incident occurs. These are outcomes, and they live outside any audit report.

How is OG Score (for exchanges) different from CertiK Skynet or CoinGecko Trust Score?

CertiK Skynet and CoinGecko Trust Score are algorithmic systems that draw on on-chain data, volume figures, cybersecurity scans, and community sentiment signals.

OG Score adds something algorithms cannot replicate: direct human observation from verified reviewers who have actually used these platforms under real conditions. That means actual withdrawal behavior during market crises, the real fairness of incident compensation programs, and whether stated insurance or reserve terms hold up in practice. Algorithms measure inputs. OG Score measures outcomes.

Can an exchange pay to improve its OG Score?

No. Payment attempts result in public flags and permanent bans for any involved reviewer accounts. Every review is attributed to an on-chain identifiable Crypto OG and is permanent.

How many reviews are needed before an OG Score is displayed?

The planned threshold is 10 independent audits per exchange. Until the Crypto OG community grows past 100 active reviewers, scores are displayed with fewer audits so users are not left without a signal.

Does a high OG Score mean an exchange is safe to use?

No score guarantees safety. A high score reflects strong observable behavior across the six metrics at the time of review. It does not predict future incidents or regulatory events. Treat it as one input. The final decision is always yours.

Does a low OG Score mean an exchange is a scam?

Not necessarily. Low scores flag specific weaknesses: poor insurance or reserve transparency, thin liquidity, slow withdrawals, weak security posture. Read the reviewer notes to understand which metric is dragging the score and whether it affects your specific use case.